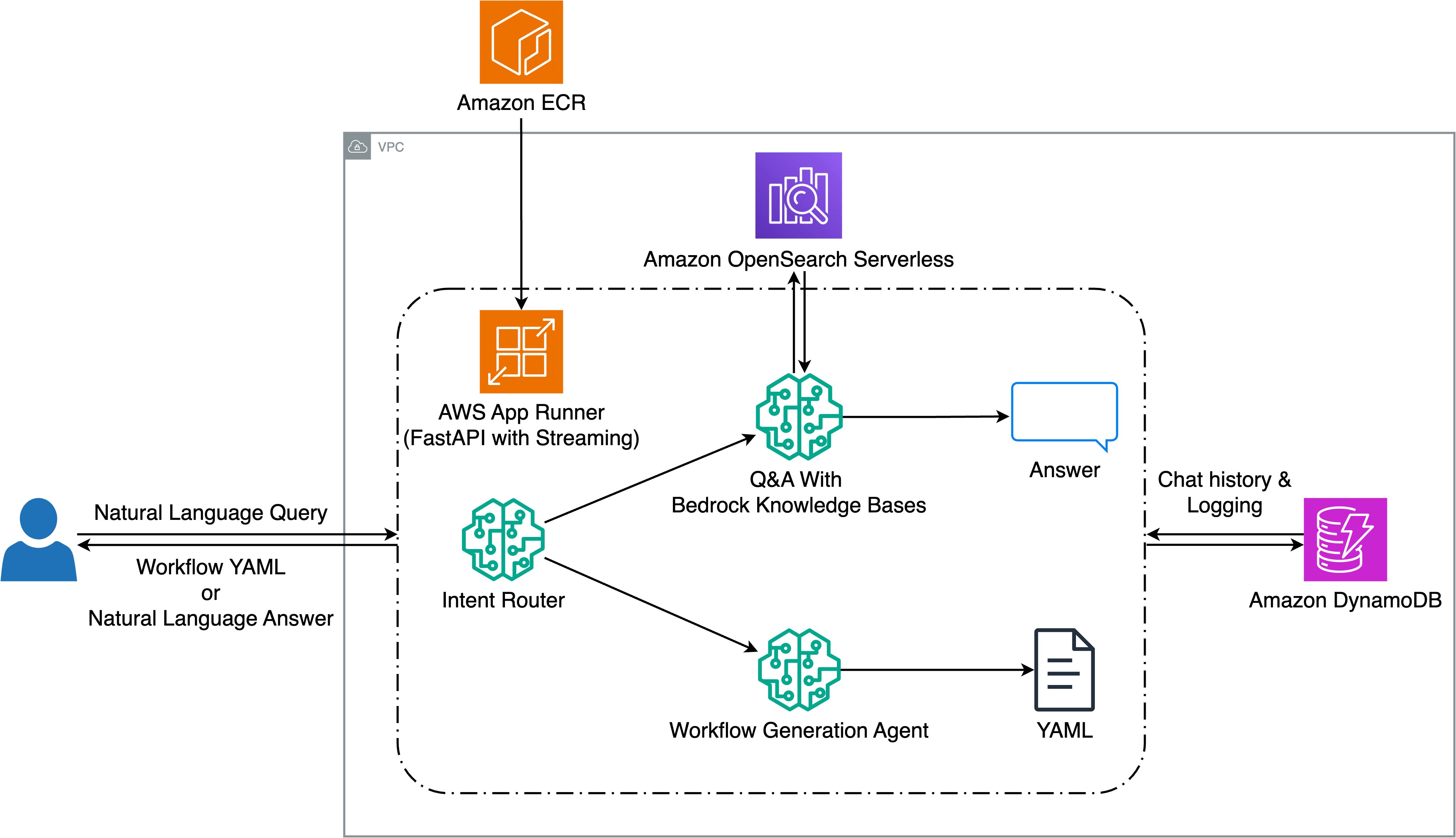

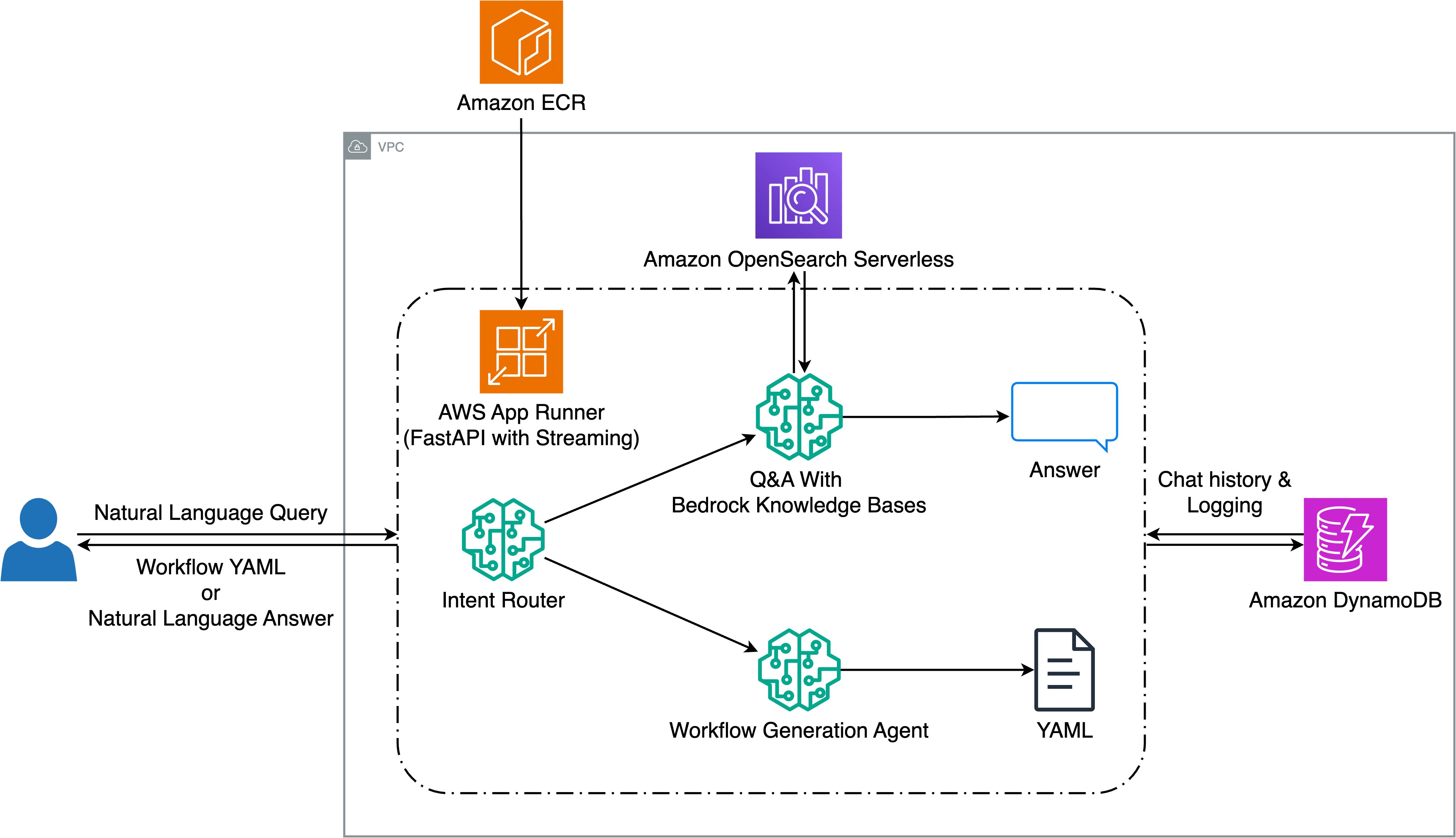

Halliburton enhances seismic workflow creation with Amazon Bedrock and Generative AI

In this post, we'll explore how we built a proof-of-concept that converts natural language queries into executable seismic workflows while providing a question-answering capability for Halliburton's Seismic Engine tools and documentation. We'll cover the technical details of the solution, share evaluation results showing workflow acceleration of up to 95%, and discuss key learnings.

The Download: AI malaise and babymaking tech

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology. We’ve entered the era of AI malaise. AI is spreading everywhere, and it is not going away. But what will it do? What effect will it have on our society? Will…

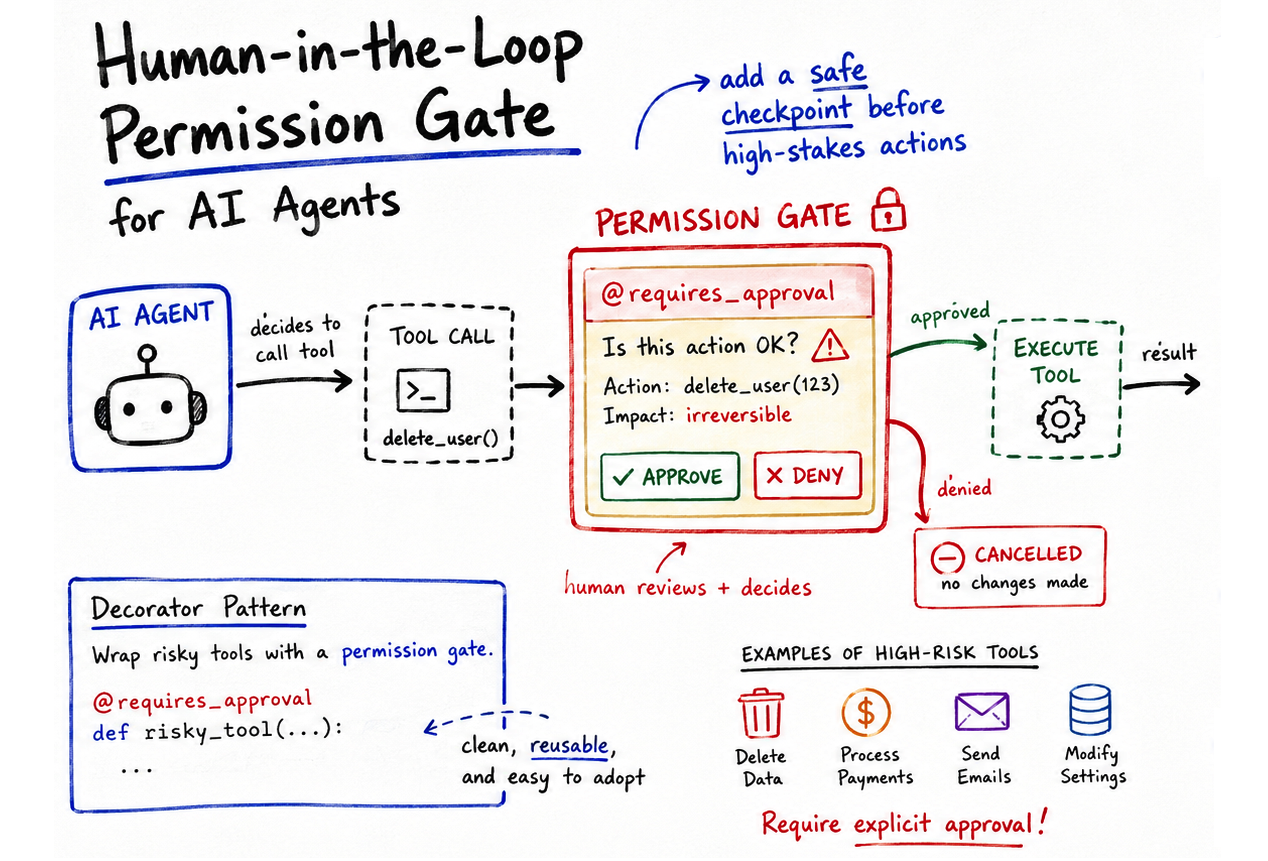

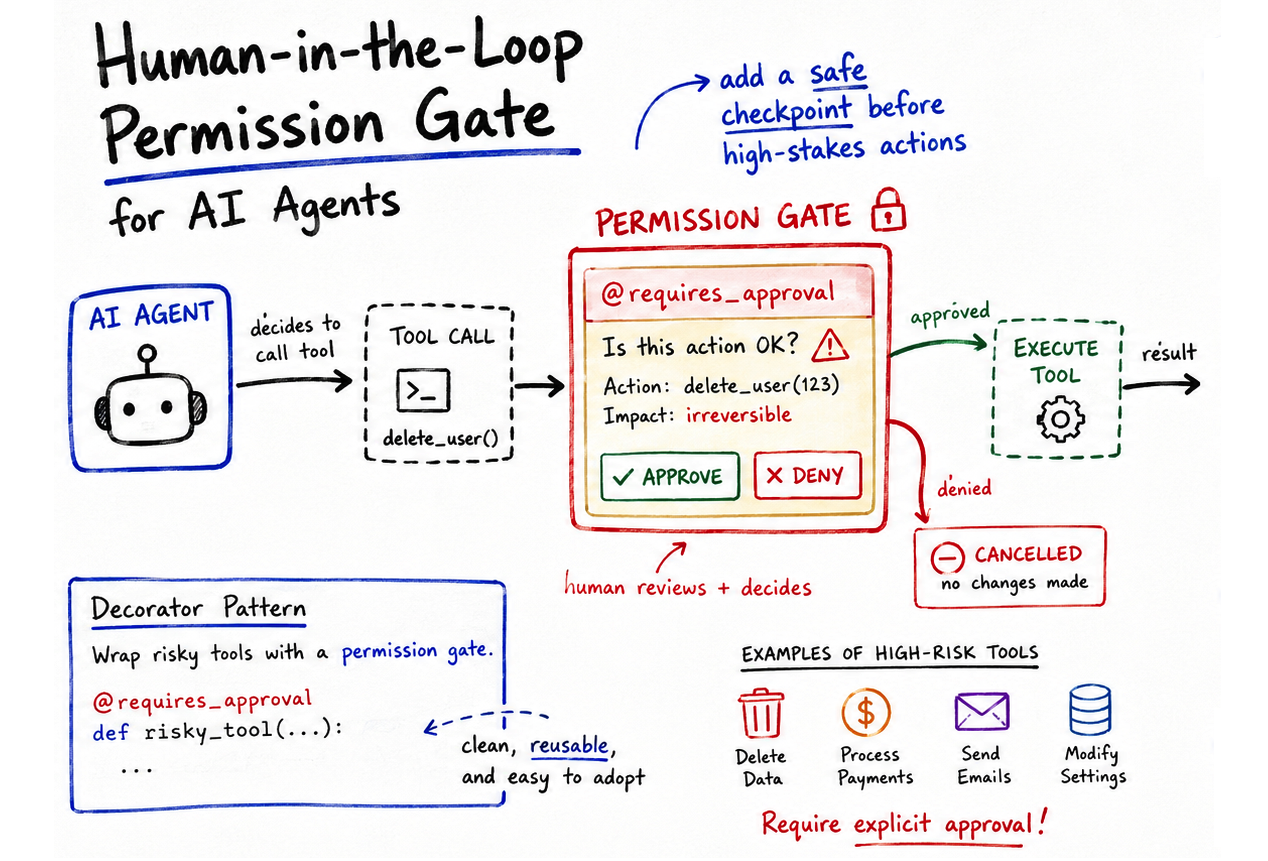

Implementing Permission-Gated Tool Calling in Python Agents

AI agents have evolved beyond passive chatbots.

Anthropic Introduces Natural Language Autoencoders That Convert Claude’s Internal Activations Directly into Human-Readable Text Explanations

When you type a message to Claude, something invisible happens in the middle. The words you send get converted into long lists of numbers called activations that the model uses to process context and generate a response. These activations are, in effect, where the model’s “thinking” lives. The problem is nobody can easily read them. […]


LightSeek Foundation Releases TokenSpeed, an Open-Source LLM Inference Engine Targeting TensorRT-LLM-Level Performance for Agentic Workloads

Inference efficiency has quietly become one of the most consequential bottlenecks in AI deployment. As agentic coding systems such as Claude Code, Codex, and Cursor scale from developer tools to infrastructure powering software development at large, the underlying inference engines serving those requests are under increasing strain. The LightSeek Foundation researchers have released TokenSpeed, an

Powering the Next American Century: US Energy Secretary Chris Wright and NVIDIA’s Ian Buck on the Genesis Mission

AI will help build the energy it needs. That’s the case U.S. Energy Secretary Chris Wright and NVIDIA Vice President of Hyperscale and High-Performance Computing Ian Buck made Thursday morning at the SCSP AI+ Expo. The 30-minute fireside chat, moderated by SCSP president Ylli Bajraktari, was called “Powering the Next American Century.” Their argument: American […]

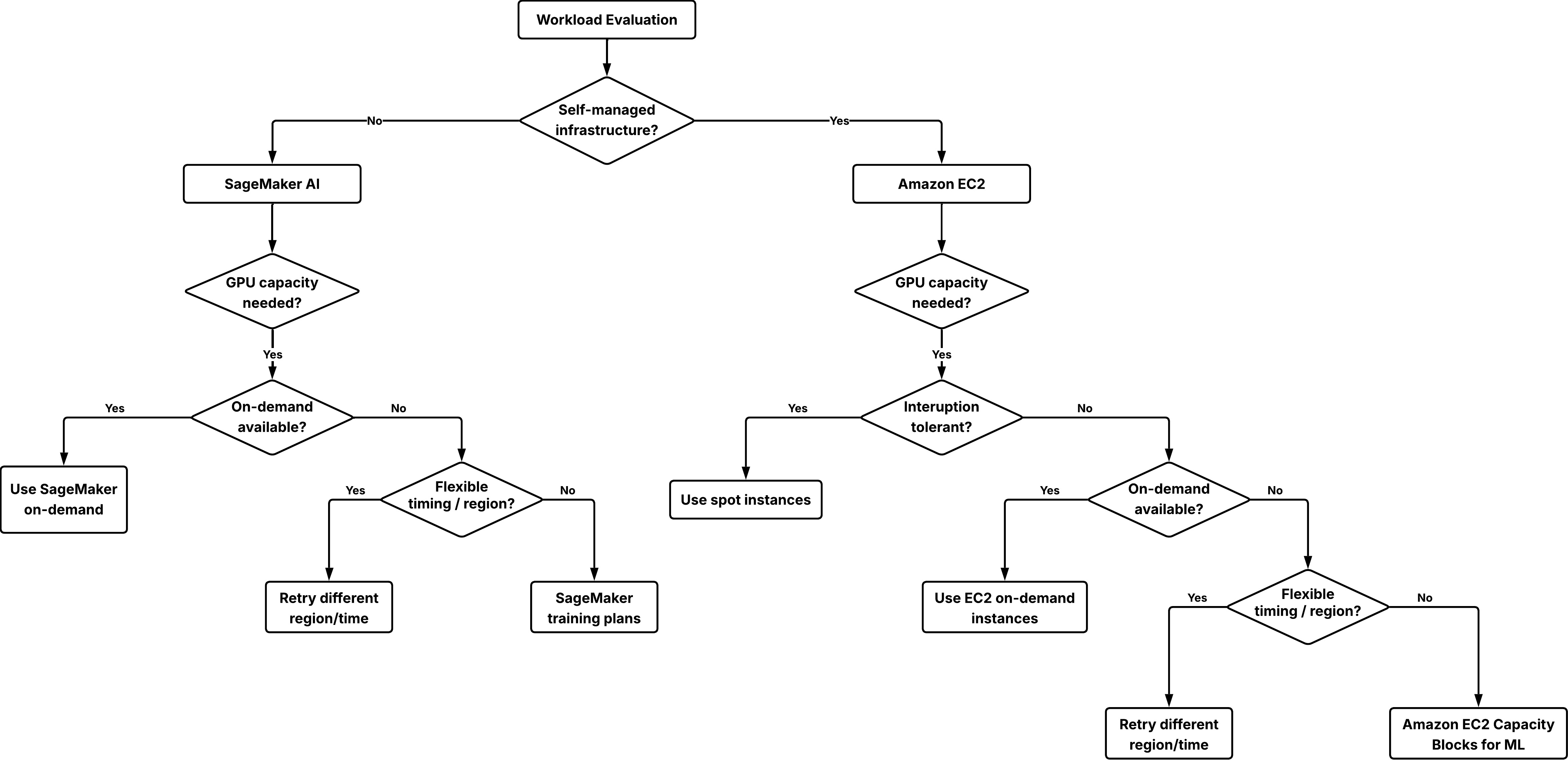

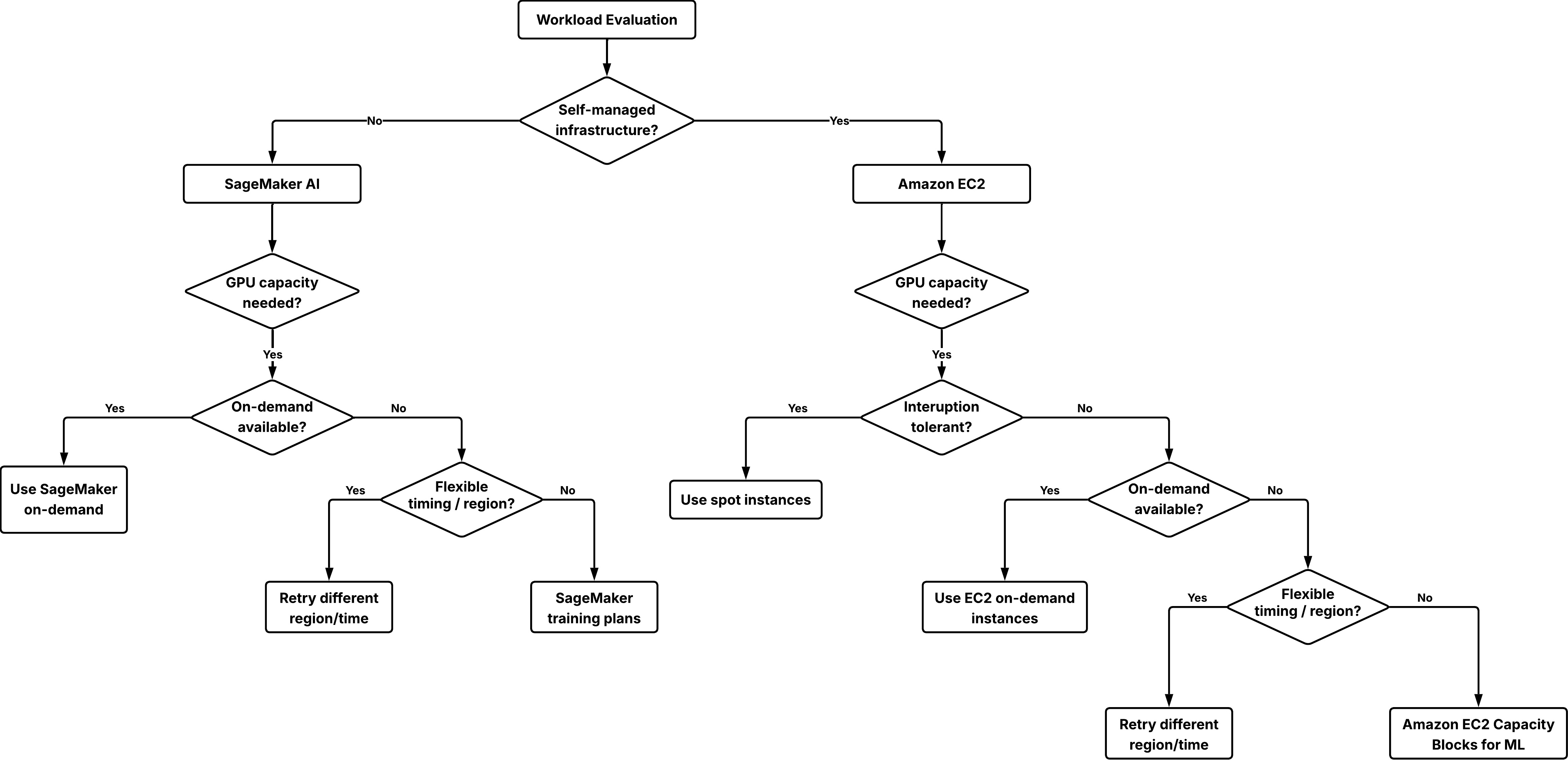

Secure short-term GPU capacity for ML workloads with EC2 Capacity Blocks for ML and SageMaker training plans

In this post, you will learn how to secure reserved GPU capacity for short-term workloads using Amazon Elastic Compute Cloud (Amazon EC2) Capacity Blocks for ML and Amazon SageMaker training plans. These solutions can address GPU availability challenges when you need short-term capacity for load testing, model validation, time-bound workshops, or preparing inference capacity ahead of a release.

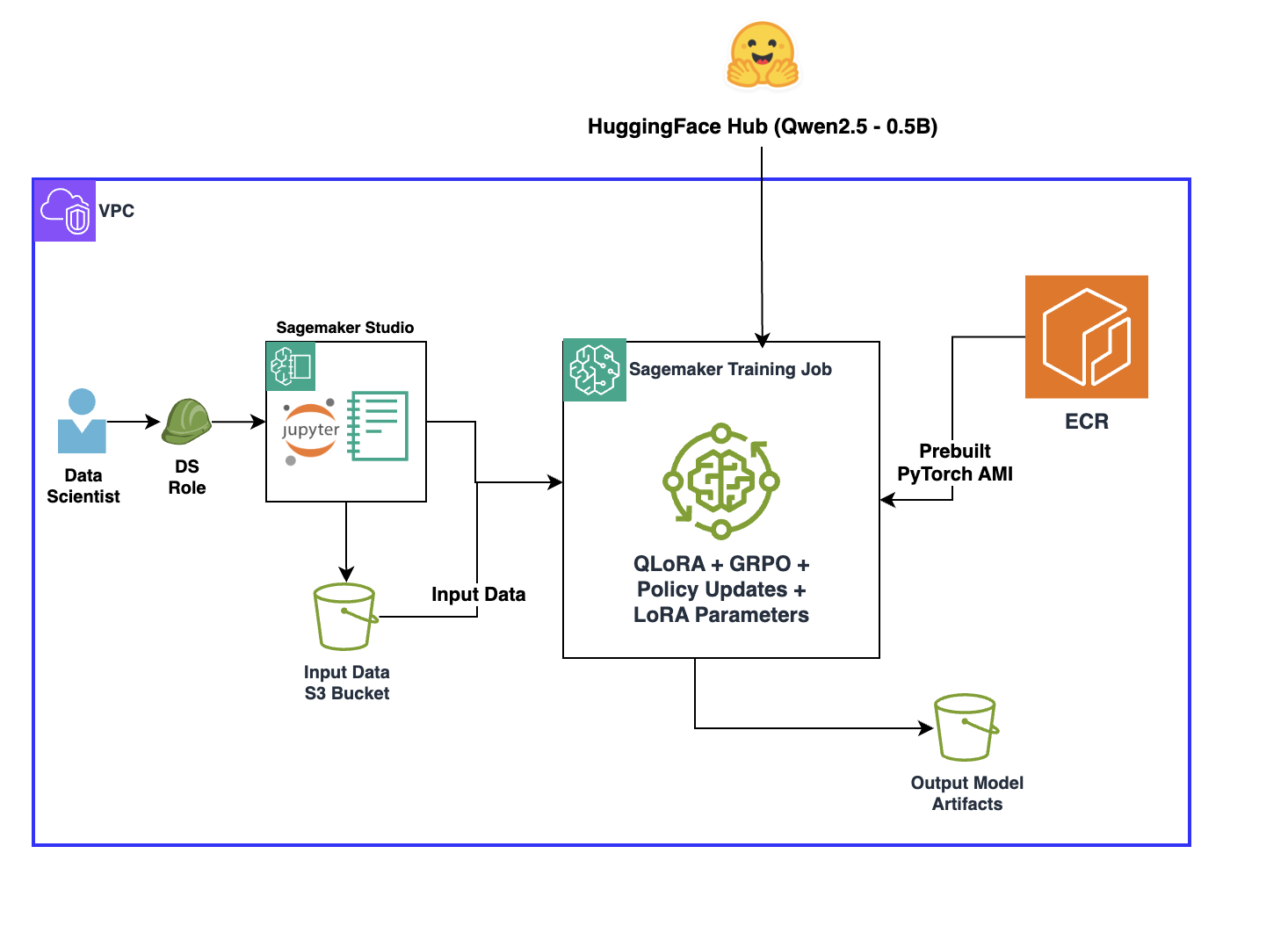

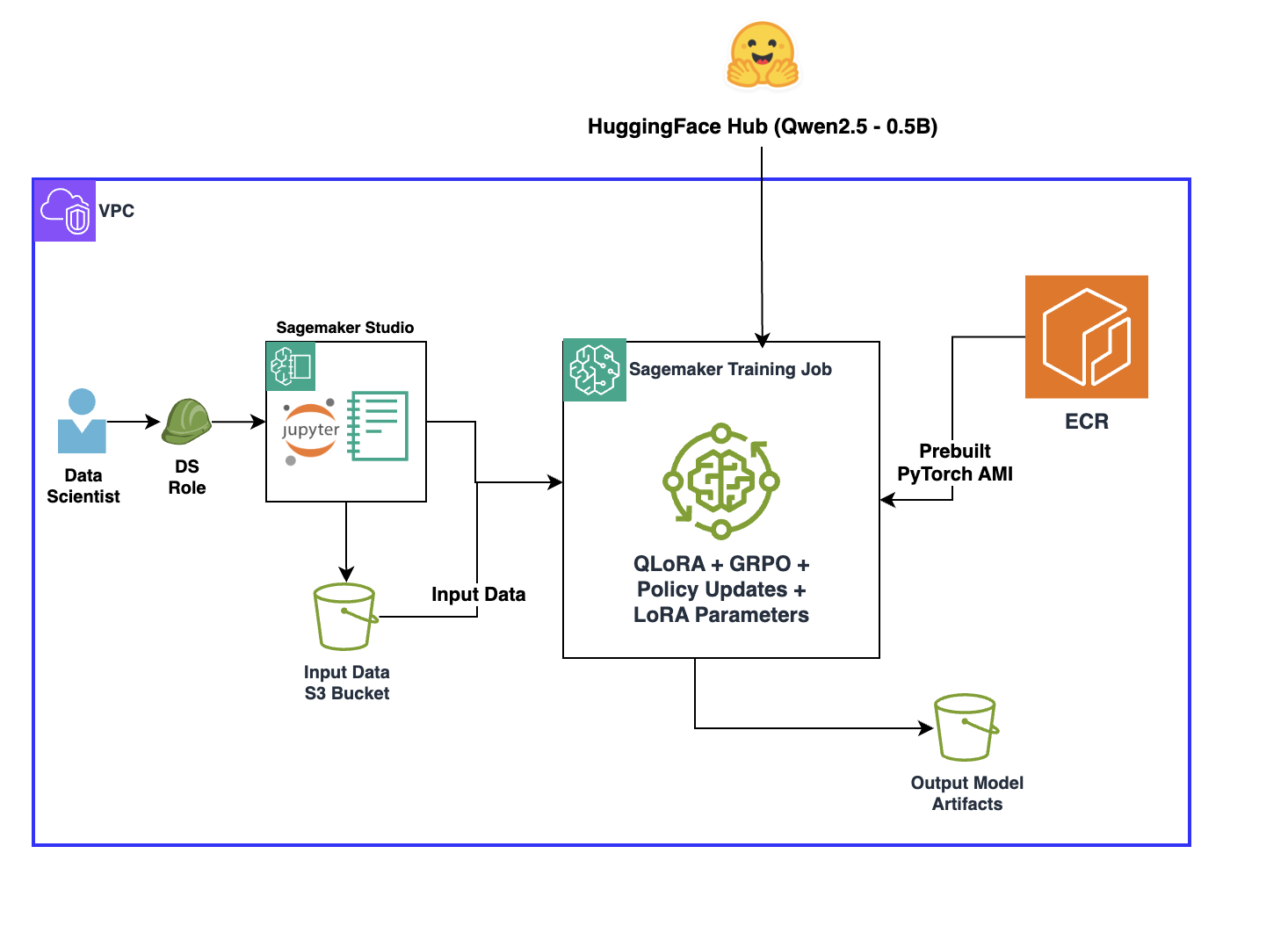

Overcoming reward signal challenges: Verifiable rewards-based reinforcement learning with GRPO on SageMaker AI

In this post, you will learn how to implement reinforcement learning with verifiable rewards (RLVR) to introduce verification and transparency into reward signals to improve training performance. This approach works best when outputs can be objectively verified for correctness, such as in mathematical reasoning, code generation, or symbolic manipulation tasks. You will also learn how to layer tech
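To make the idea of a "verifiable" reward concrete: when a task has an objectively checkable answer, the reward can be a deterministic function that compares the model's final answer with a reference, and GRPO then scores each sampled completion relative to the other completions in its group. The sketch below is illustrative only and is not the post's SageMaker AI implementation; the `####` answer marker and all function names are hypothetical assumptions.

```python
import re
from statistics import mean, pstdev
from typing import List, Optional


def extract_answer(completion: str) -> Optional[str]:
    """Pull a numeric final answer that follows a '####' marker (hypothetical convention)."""
    match = re.search(r"####\s*(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


def verifiable_reward(completion: str, ground_truth: str) -> float:
    """Binary, checkable reward: 1.0 if the extracted answer equals the reference, else 0.0."""
    answer = extract_answer(completion)
    if answer is None:
        return 0.0  # No parseable answer counts as incorrect.
    return 1.0 if float(answer) == float(ground_truth) else 0.0


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages: standardize each reward against its group's mean and std.

    eps guards against division by zero when every completion gets the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # A group of sampled completions for one prompt whose reference answer is 4.
    completions = ["... so x = 4. #### 4", "I think #### 5", "no final answer", "#### 4.0"]
    rewards = [verifiable_reward(c, "4") for c in completions]
    print(rewards)  # [1.0, 0.0, 0.0, 1.0]
    print(group_relative_advantages(rewards))
```

Because the reward is computed by a rule rather than a learned reward model, every score can be audited, which is the transparency benefit the post describes; correct completions receive positive advantages and incorrect ones negative, without training a separate critic.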

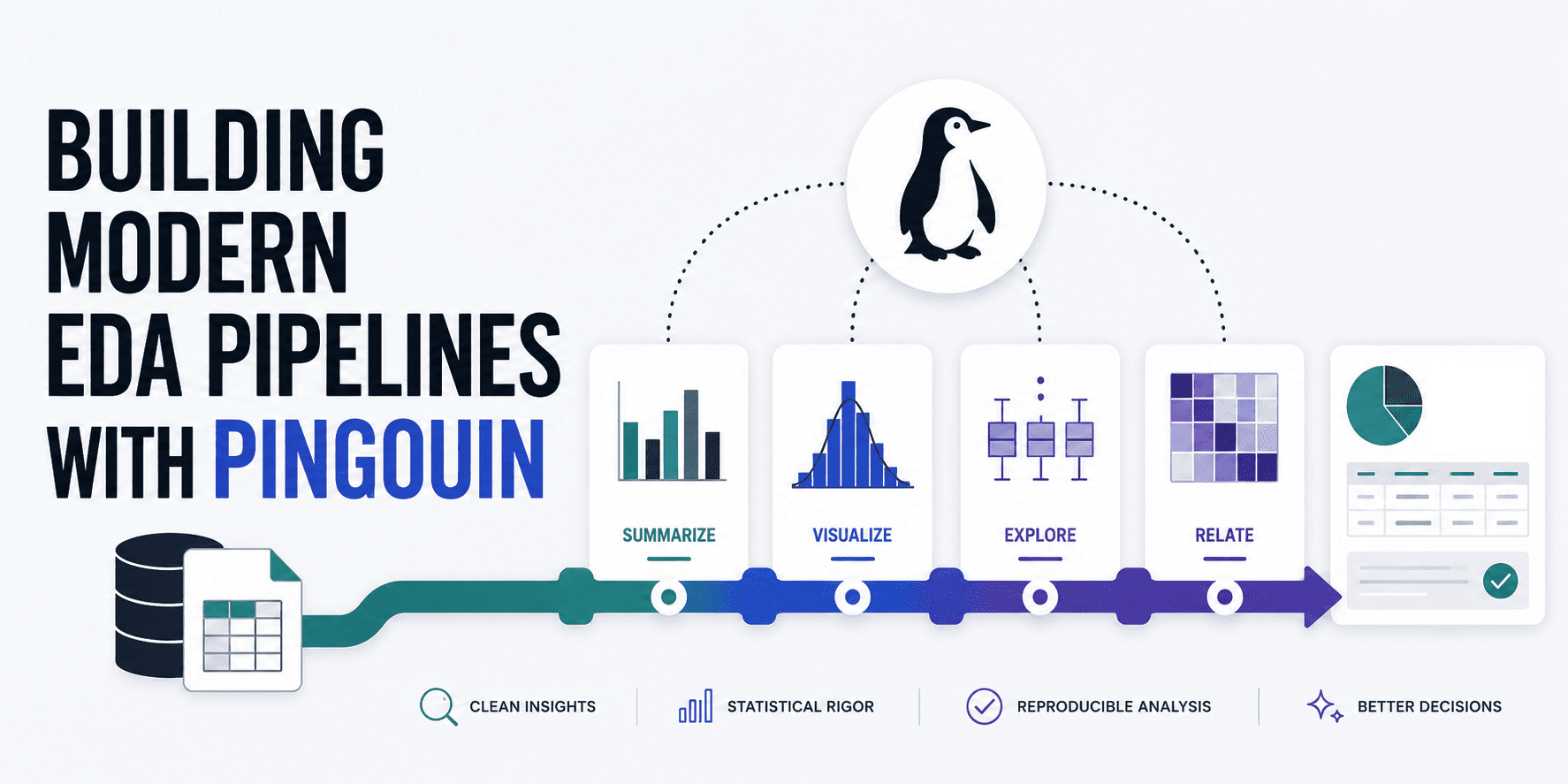

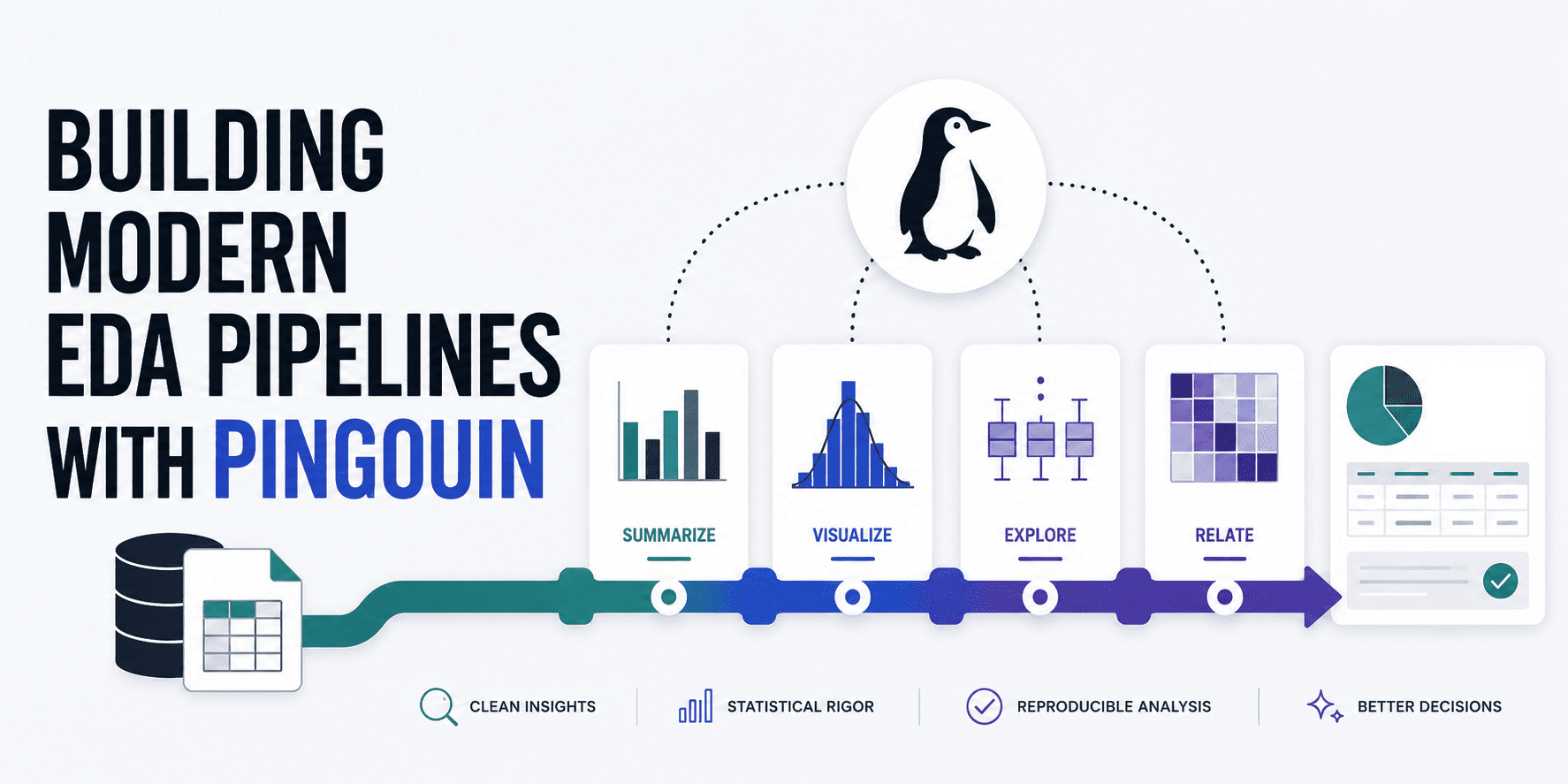

Building Modern EDA Pipelines with Pingouin

Learn how to build a holistic pipeline for rigorous, statistical EDA, validating several important data properties.

Linked and Loaded: Gaijin Single Sign-On Now Available on GeForce NOW

Less typing, more tanking. Faster logins mean more time in the gaming action — and this week provides GeForce NOW members with a smoother path straight into the battlefield. Cloud gaming is all about instant access to titles across devices, and the latest GeForce NOW update removes another layer for members jumping into their Gaijin […]

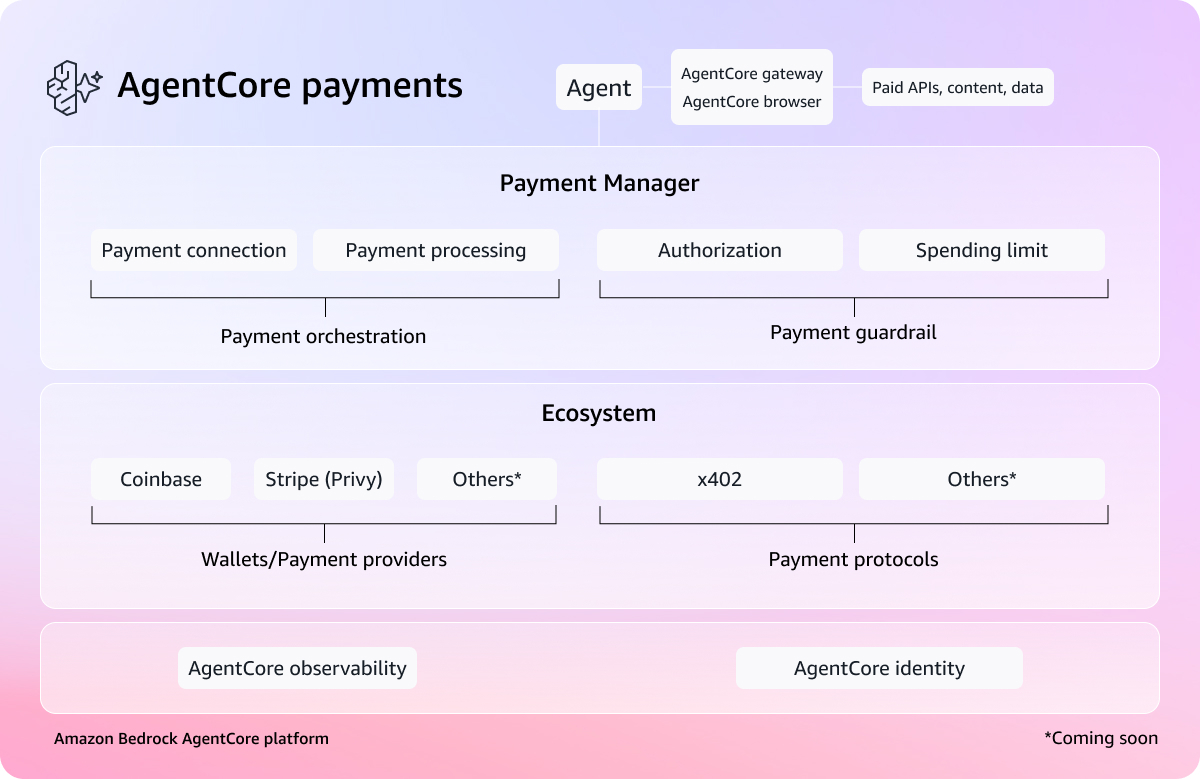

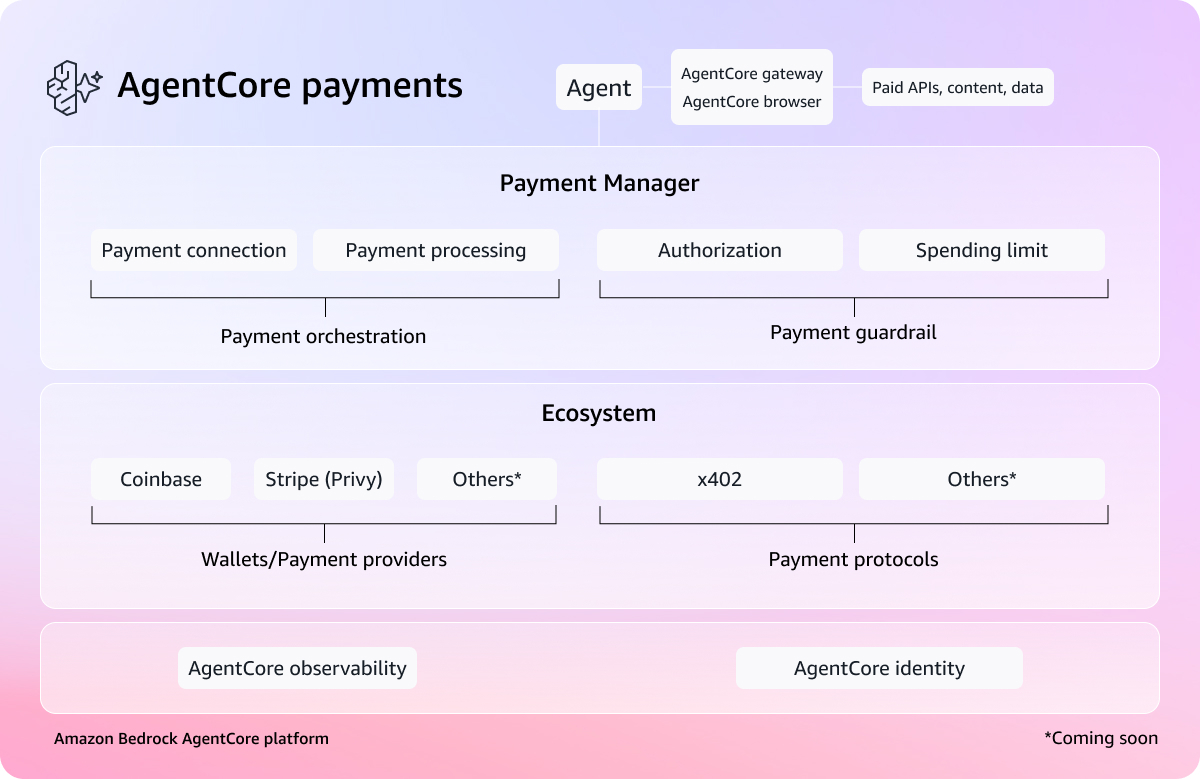

Agents that transact: Introducing Amazon Bedrock AgentCore Payments, built with Coinbase and Stripe

Today, we're announcing a preview of Amazon Bedrock AgentCore Payments, a new set of features in Amazon Bedrock AgentCore that enables AI agents to instantly access and pay for what they use. AgentCore Payments was developed in partnership with Coinbase and Stripe.

The Download: the tech reshaping IVF and the rise of balcony solar

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology. What’s next for IVF. IVF has brought millions of babies into the world over the last four decades. But the process can still be slow, painful, and expensive, and far from guaranteed…

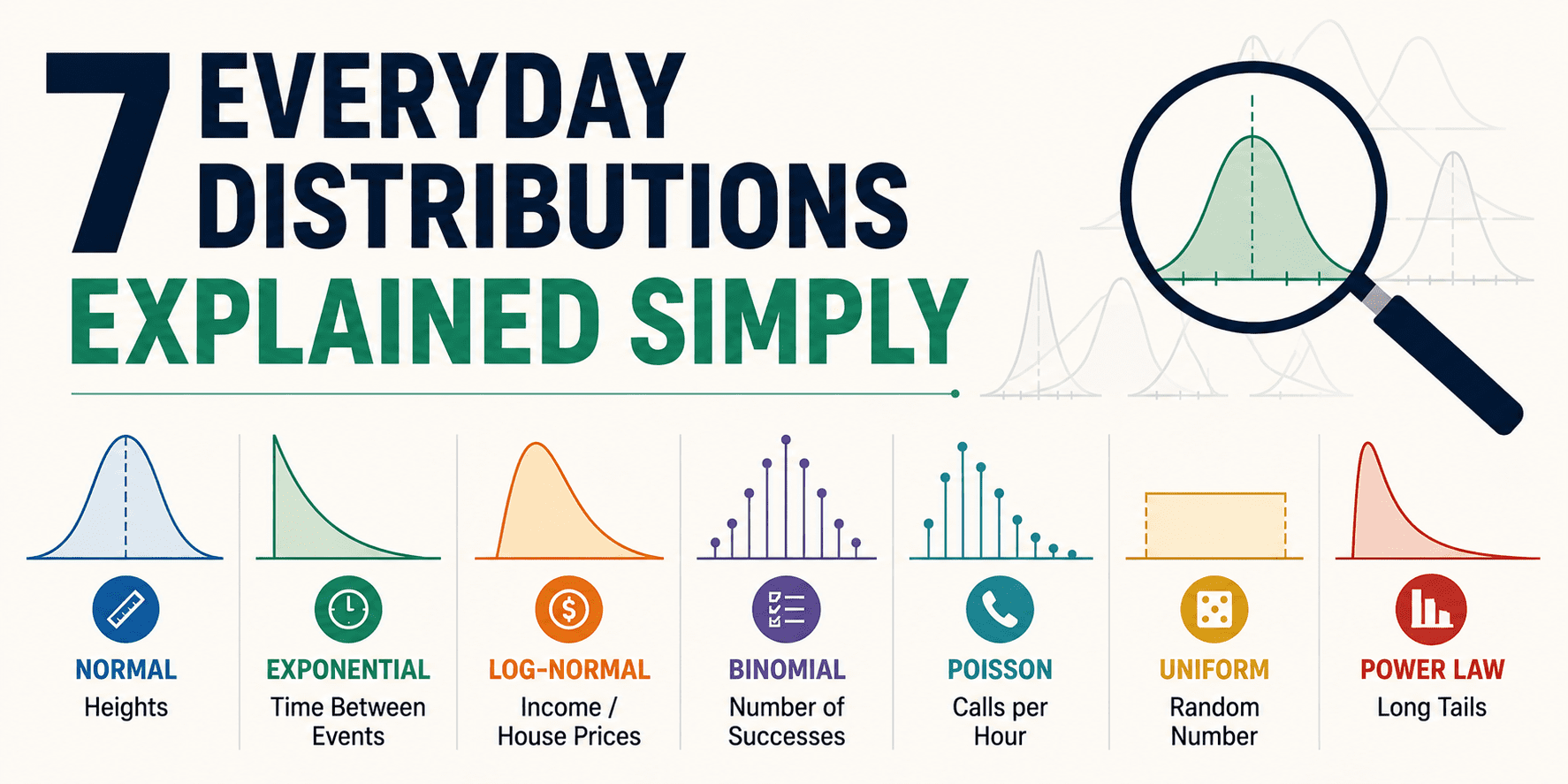

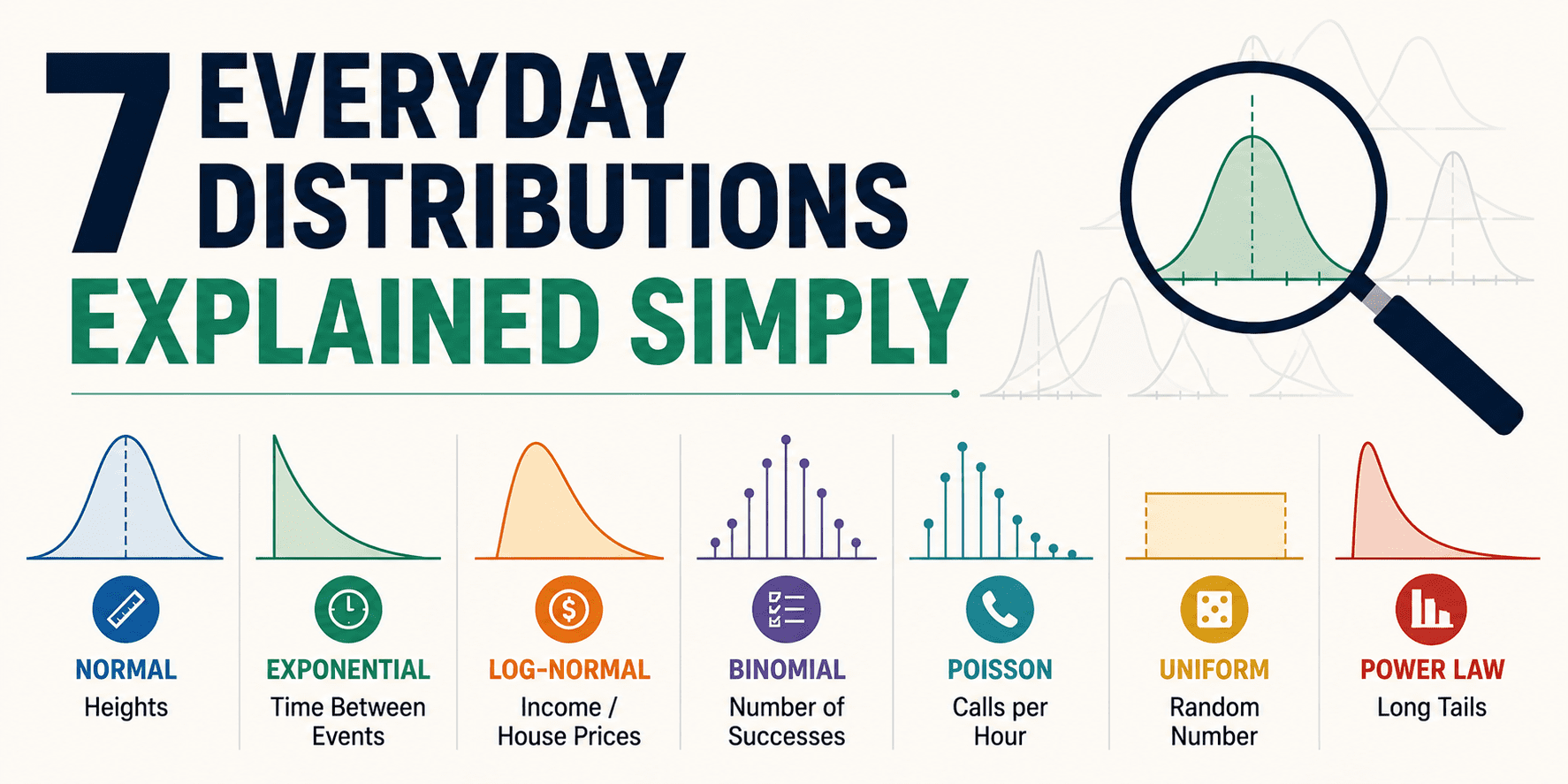

7 Everyday Distributions Explained Simply

This article is a quick, everyday tour of seven distributions you'll actually recognize once you know what to look for. No heavy math. No gatekeeping.

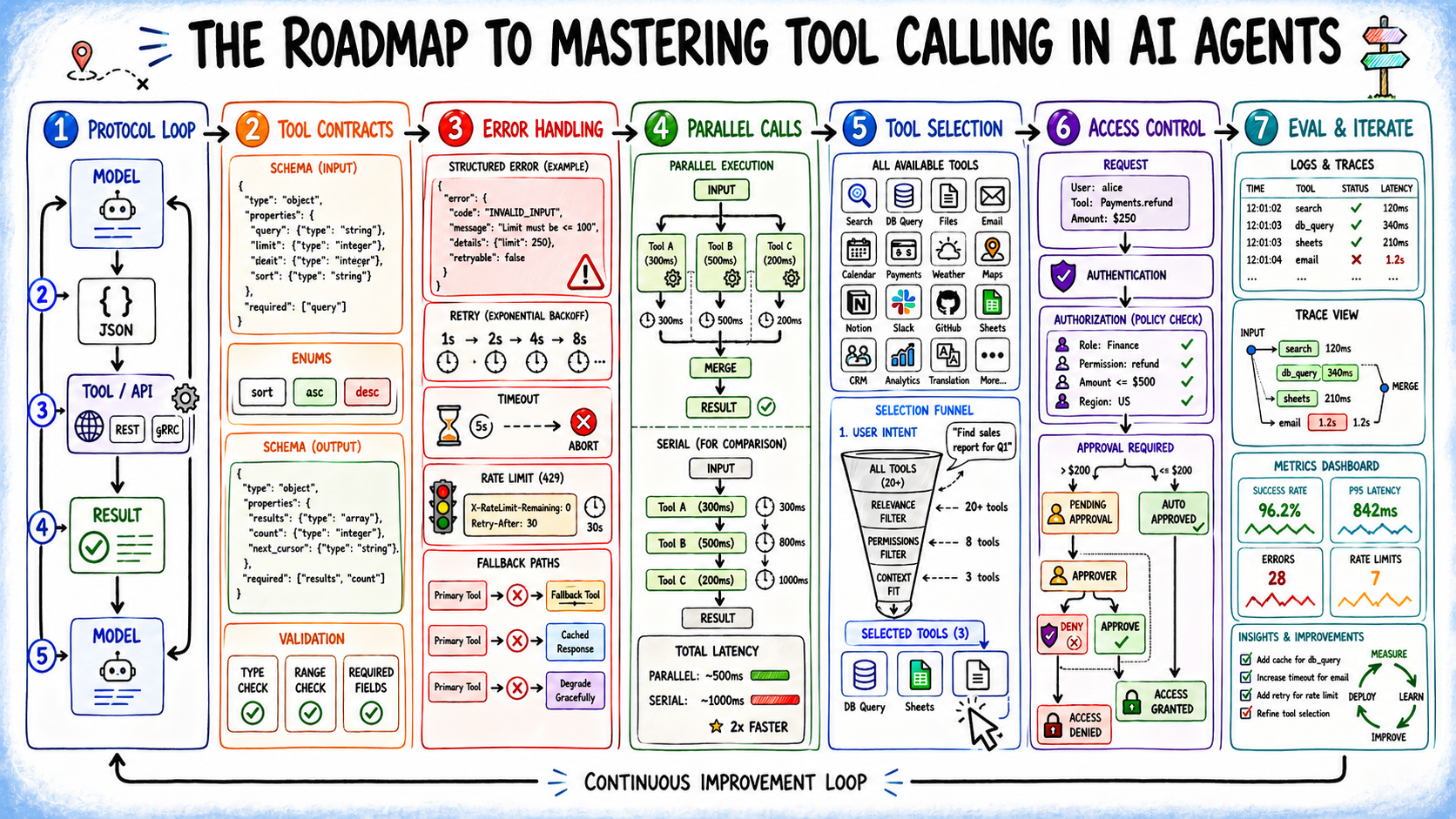

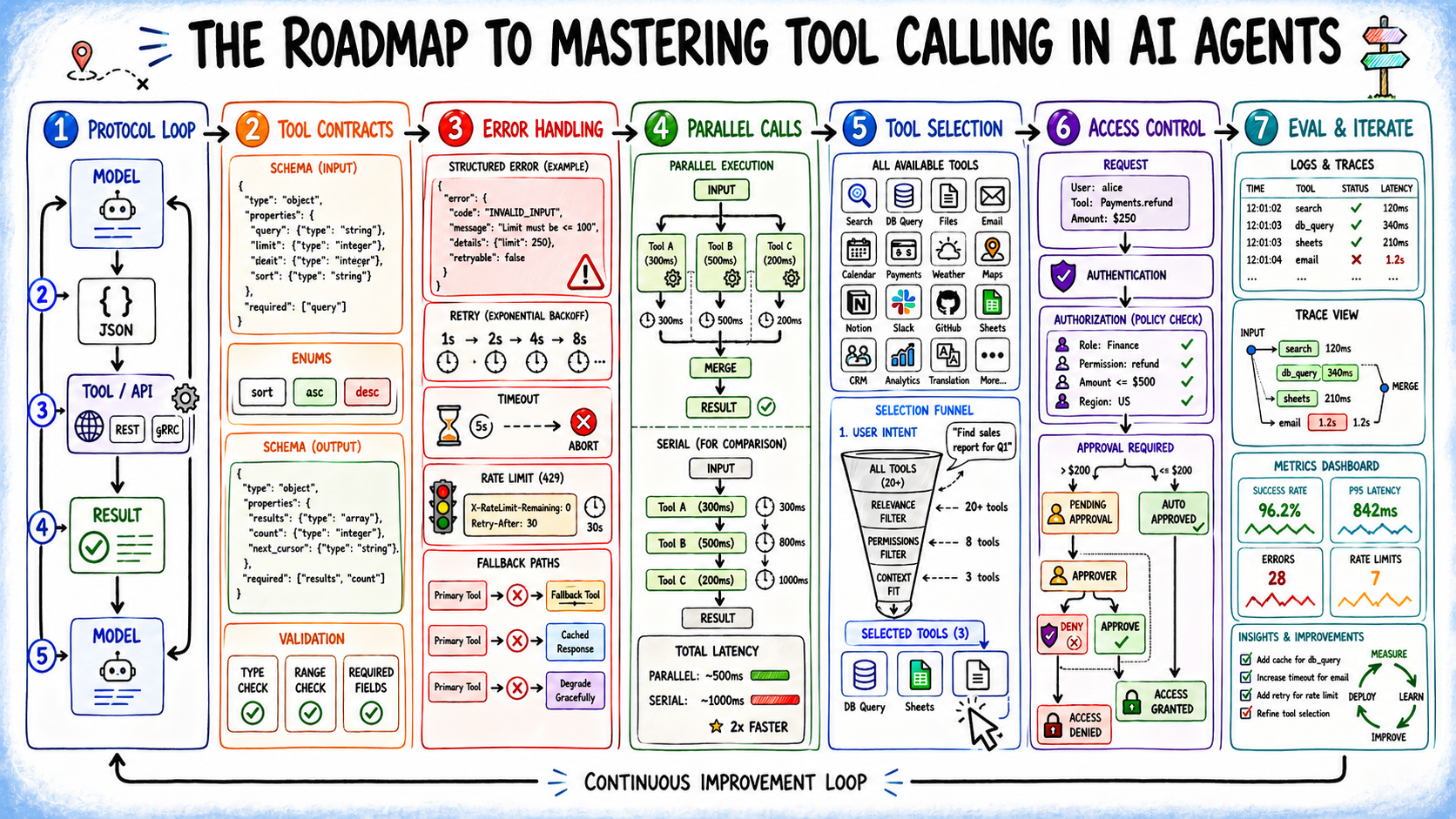

The Roadmap to Mastering Tool Calling in AI Agents


What’s next for IVF

MIT Technology Review’s What’s Next series looks across industries, trends, and technologies to give you a first look at the future. You can read the rest of them here. Forty-eight years ago this July, Louise Joy Brown became the world’s first person born with the help of in vitro fertilization. Millions more IVF babies have entered…

Meta AI Releases NeuralBench: A Unified Open-Source Framework to Benchmark NeuroAI Models Across 36 EEG Tasks and 94 Datasets

The Meta AI team has released NeuralBench, a unified open-source framework for benchmarking NeuroAI models, alongside NeuralBench-EEG v1.0, the largest open EEG benchmark to date, covering 36 tasks, 94 datasets, and 14 deep learning architectures evaluated under a single standardized interface across 9,478 subjects and 13,603 hours of brain recordings.


Zyphra Releases ZAYA1-8B: A Reasoning MoE Trained on AMD Hardware That Punches Far Above Its Weight Class

Zyphra releases ZAYA1-8B, a reasoning Mixture-of-Experts model with only 760M active parameters that outperforms open-weight models many times its size on math and coding benchmarks, closing in on DeepSeek-V3.2 and surpassing Claude Sonnet 4.5 on HMMT'25 with its novel Markovian RSA test-time compute method. Trained end-to-end on AMD Instinct MI300 hardware and released under Apache 2.0, it

CopilotKit Introduces Enterprise Intelligence Platform That Gives Agentic Applications Persistent Memory Across Sessions and Devices

CopilotKit Intelligence adds a managed persistence layer on top of the open-source CopilotKit stack, giving agents the ability to retain context, state, and interaction history without custom storage infrastructure.

The post CopilotKit Introduces Enterprise Intelligence Platform That Gives Agentic Applications Persistent Memory Across Sessions and Devices appeared first on MarkTechPost.

Abacus AI Review: Features, AI Agents & Automation Explained (Honest Guide)

A detailed Abacus AI review covering ChatLLM, Abacus AI Agent, Claw, automation, app building, image and video generation, pricing, pros, cons, and who should use it.

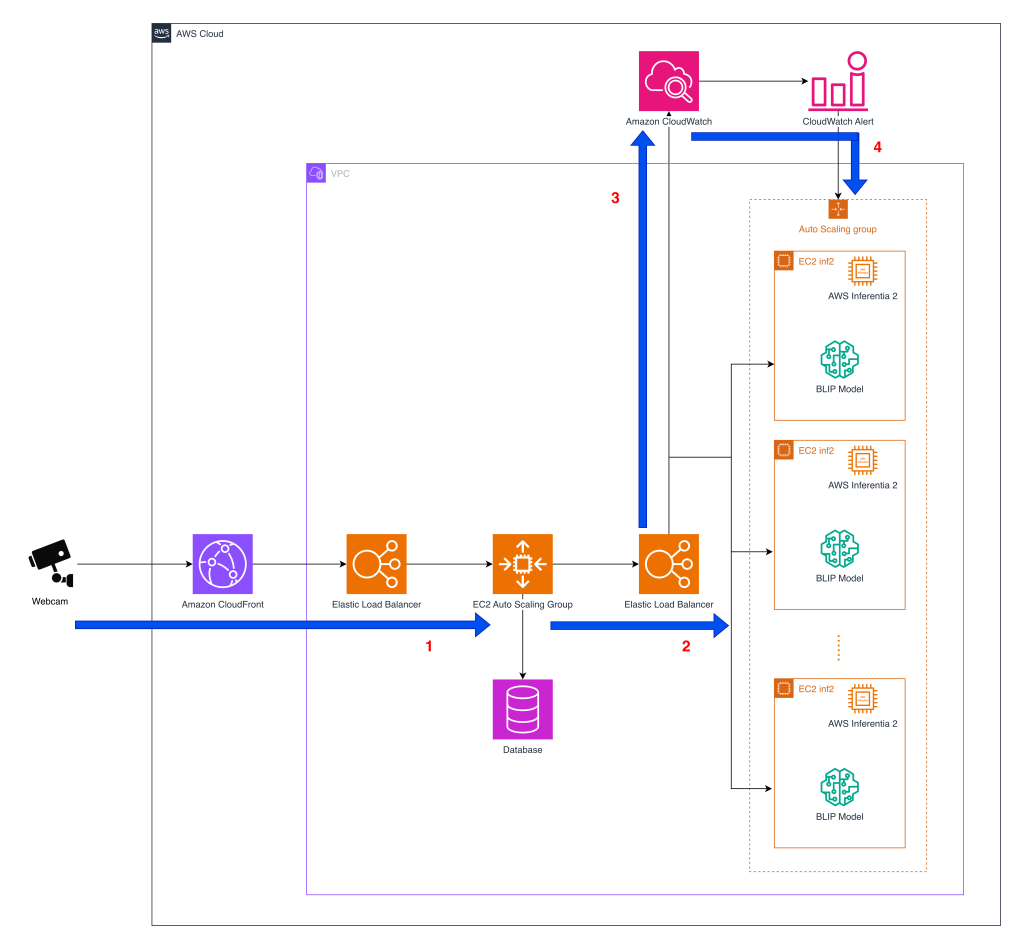

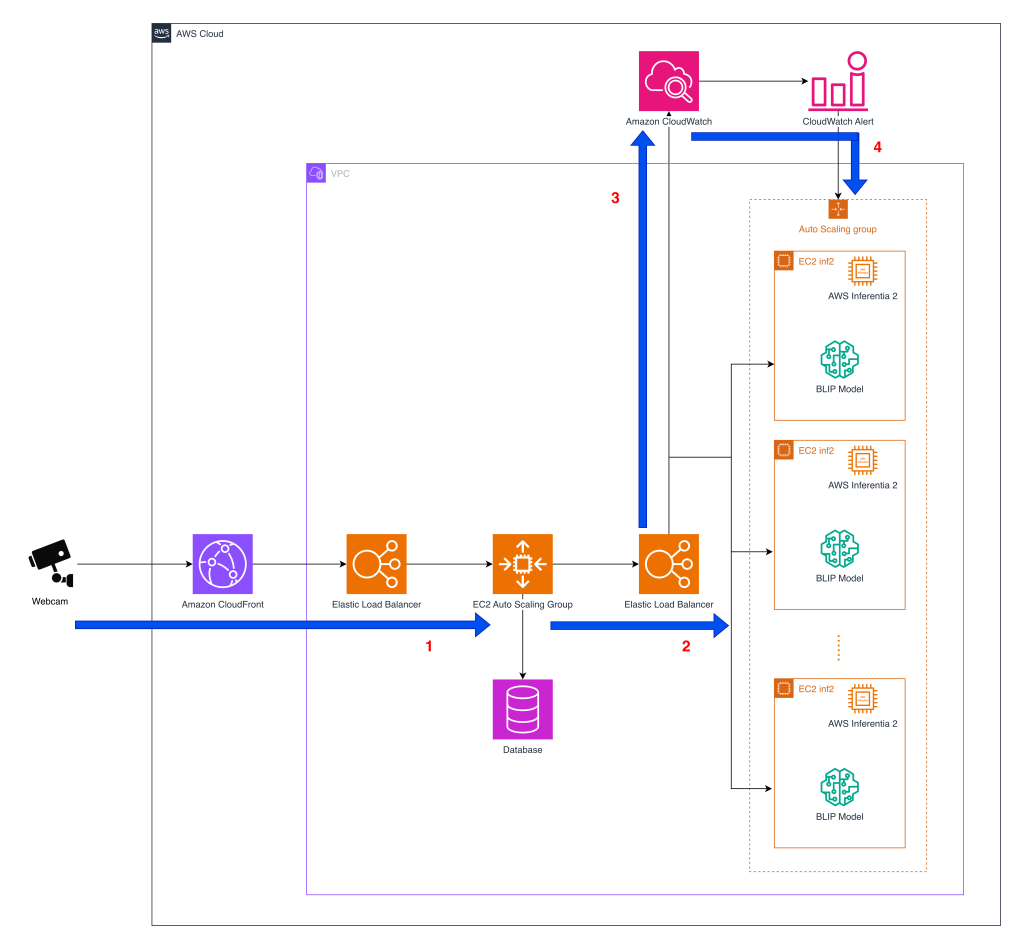

Cost-effective deployment of vision-language models for pet behavior detection on AWS Inferentia2

Tomofun, the Taiwan-headquartered pet-tech startup behind the Furbo Pet Camera, is redefining how pet owners interact with their pets remotely. To reduce costs and maintain accuracy, Tomofun turned to EC2 Inf2 instances powered by AWS Inferentia2, Amazon's purpose-built AI chips. In this post, we walk through the following sections in detail.